尤度関数を多次元正規分布で仮定する(アヤメデータの場合、d=4(Sepal.Length, Sepal.Width, Petal.Length, Petal.Width))

識別関数はベイズの定理を使い、上の尤度関数の対数をとって用い、-1を両辺にかけることで

識別規則は、

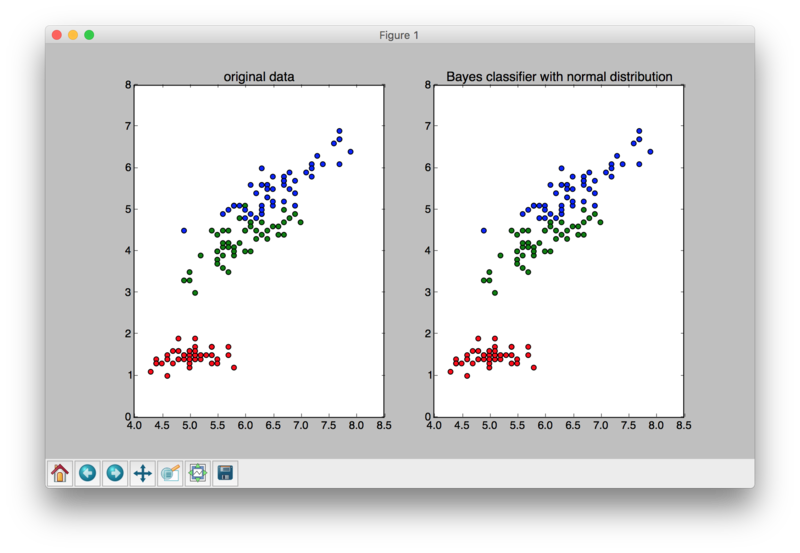

import numpy as np import matplotlib.pyplot as plt from sklearn import datasets import math from numpy import dot import pdb iris = datasets.load_iris() data = iris.data target = iris.target Pc1 = 1/3.0 # a priori probability Pc2 = 1/3.0 Pc3 = 1/3.0 c1_indices = np.where(target==0)[0].tolist() c2_indices = np.where(target==1)[0].tolist() c3_indices = np.where(target==2)[0].tolist() c1=[] c2=[] c3=[] for idx in c1_indices: c1.append(data[idx]) for idx in c2_indices: c2.append(data[idx]) for idx in c3_indices: c3.append(data[idx]) c1a = np.array(c1) c2a = np.array(c2) c3a = np.array(c3) mu1 = sum(c1) /len(c1) mu2 = sum(c2) /len(c2) mu3 = sum(c3) /len(c3) mu1s = mu1*np.ones((len(c1a),1)) mu2s = mu2*np.ones((len(c2a),1)) mu3s = mu3*np.ones((len(c3a),1)) S1 = np.zeros((4,4)) for i in range(4): for j in range(4): s = sum((c1a[:,i] - mu1s[:,i])*(c1a[:,j]-mu1s[:,j])) cov = s/len(data) S1[i,j] = cov S2 = np.zeros((4,4)) for i in range(4): for j in range(4): s = sum((c2a[:,i] - mu2s[:,i])*(c2a[:,j]-mu2s[:,j])) cov = s/len(data) S2[i,j] = cov S3 = np.zeros((4,4)) for i in range(4): for j in range(4): s = sum((c3a[:,i] - mu3s[:,i])*(c3a[:,j]-mu3s[:,j])) cov = s/len(data) S3[i,j] = cov def g1(x): A=np.matrix(x).T-np.matrix(mu1).T B=np.linalg.inv(S1) C=A.T detS1 = np.linalg.det(S1) result = dot(dot(C,B),A) + np.log(detS1) - 2*math.log(Pc1) return result.item() def g2(x): A=np.matrix(x).T-np.matrix(mu2).T B=np.linalg.inv(S2) C=A.T detS2 = np.linalg.det(S2) result = dot(dot(C,B),A) + np.log(detS2) - 2*math.log(Pc2) return result.item() def g3(x): A=np.matrix(x).T-np.matrix(mu3).T B=np.linalg.inv(S3) C=A.T detS3 = np.linalg.det(S3) result = dot(dot(C,B),A) + np.log(detS3) - 2*math.log(Pc3) return result.item() def classify(x): map1inv = g1(x) map2inv = g2(x) map3inv = g3(x) mapinvs = np.array([map1inv,map2inv,map3inv]) result = np.where(mapinvs==min(mapinvs))[0].tolist()[0] return result results = [] for d in data: res = classify(d) results.append(res) def makeColor(labels): colors=[] for l in labels: if l==0: colors.append('r') elif l==1: colors.append('g') elif l==2: colors.append('b') return colors def calcPrecision(target,results): success = 0 fail = 0 for i in range(len(target)): if target[i]==results[i]: success = success + 1 else: fail = fail+1 return success/float(success+fail)*100 precision = calcPrecision(target,results) print 'success rate: ',precision,'%' plt.subplot(121) xs = data[:,0] ys = data[:,2] s = [30 for i in range(len(xs))] colors = makeColor(target) plt.scatter(xs,ys,s,colors) plt.title('original data') plt.subplot(122) colors = makeColor(results) plt.scatter(xs,ys,s,colors) plt.title('Bayes classifier with normal distribution') plt.show()

success rate: 98.6666666667 %